Why AI Safety Is More Than Just a Technical Issue

Artificial Intelligence (AI) is now a core part of how things get done. It’s speeding up processes, making healthcare more precise, and personalizing our experiences from education to entertainment. However, as AI systems become more embedded in daily life, their impact extends beyond convenience—they influence how we consume information and make key decisions that shape our lives.

AI models, especially Large Language Models (LLMs), are trained on vast datasets, and those datasets shape the information people receive. Without proper oversight, these models can reinforce harmful stereotypes, spread misinformation, and even undermine political stability. AI safety isn’t just a technical issue; it’s a societal one, affecting trust, governance, and the public good.

The Core Issues in AI Safety

1. Bias in AI Models

AI models are only as good as the data they’re trained on. If the data contains racial, gender-based, or socio-economic biases, AI outputs will reflect and can amplify those biases. For example, a biased model can:

- Reinforce racial and ethnic biases, including harmful stereotypes (e.g., associating Black individuals with criminality or linking certain ethnic groups with lower intelligence).

- Produce gender-biased responses that depict women in traditional caregiving roles while associating men with leadership.

- Favor dominant political narratives while suppressing minority perspectives.

- Misinterpret multilingual contexts, leading to insensitive or inaccurate responses (e.g., translating a harmless phrase in one language into a highly offensive phrase in another, or failing to recognize regional dialects).

- Exhibit cultural insensitivity by misrepresenting non-Western cultures, failing to account for local contexts, and generating responses that conflict with local values and traditions.

- Exhibit socio-economic bias, favoring wealthier regions while underrepresenting marginalized communities (e.g., AI-driven loan systems disproportionately denying credit to applicants from low-income neighborhoods).

AI models trained primarily on English-language sources often reflect Western-centric viewpoints, limiting access to balanced, locally relevant information for users in non-Western regions. In multicultural societies, bias can deepen divides rather than bridge them, making training AI with diverse and representative datasets essential.
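To make the loan example above measurable, one option is to compare approval rates across groups. The sketch below is a minimal illustration and not a method described in this article: the `decisions` data is invented, the group names are hypothetical, and the 0.8 cutoff is an assumption borrowed from the commonly cited "four-fifths" rule of thumb.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return the share of approved applications per group.

    `decisions` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Ratio of the lowest group approval rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved in any group, so there is no gap
    return min(rates.values()) / highest

# Hypothetical, hand-made data for illustration only.
decisions = (
    [("high_income_area", True)] * 8 + [("high_income_area", False)] * 2
    + [("low_income_area", True)] * 4 + [("low_income_area", False)] * 6
)

rates = approval_rates(decisions)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed threshold, not a legal standard
print(rates, ratio, needs_review)
```

A low ratio does not prove discrimination on its own, but it is a cheap signal that a system's decisions deserve a closer audit.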

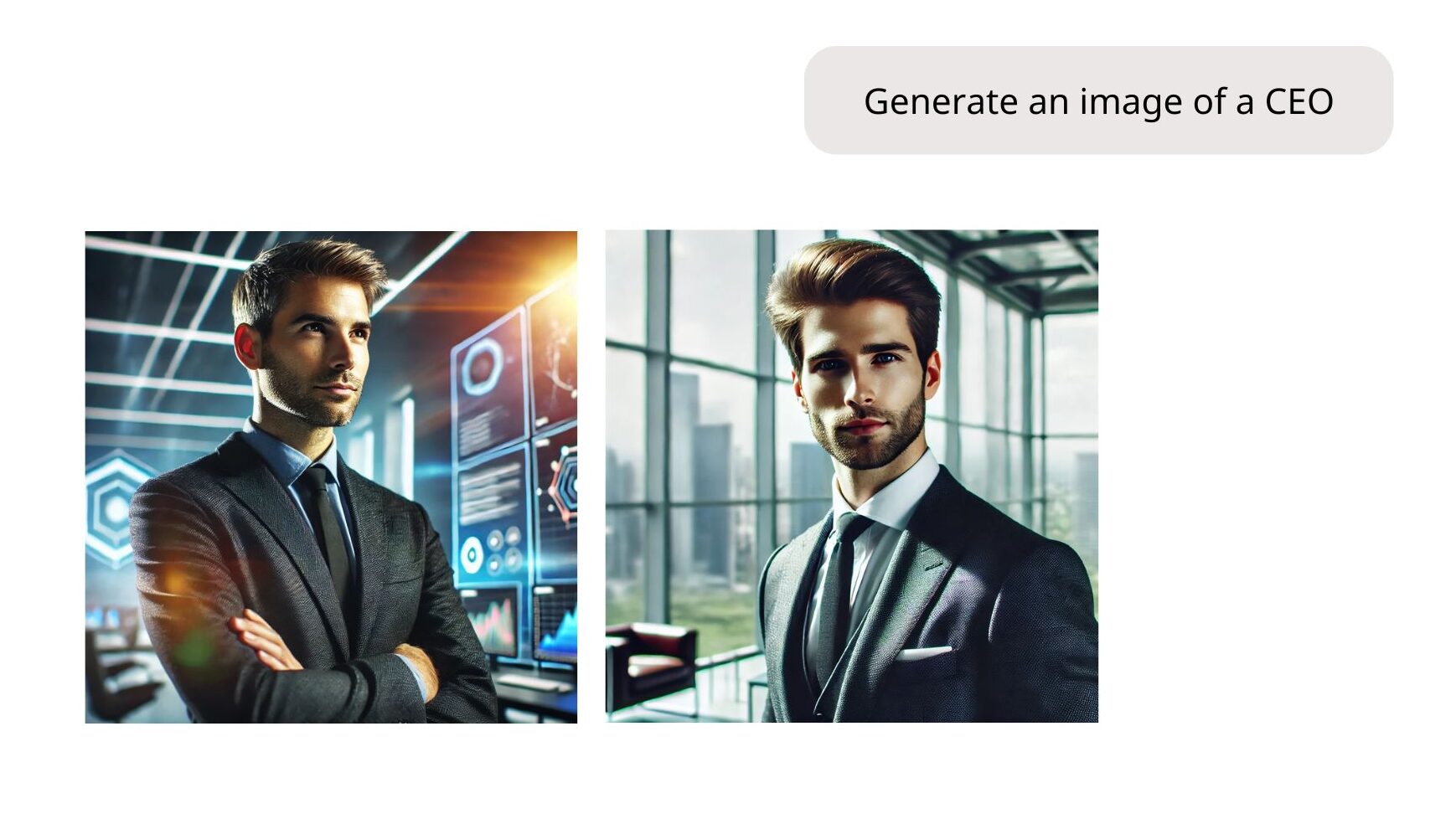

Fig 1: DALL-E 2 (OpenAI) exhibits racial bias by generating mostly images of white CEOs, demonstrating the model’s reflection of societal stereotypes. [Source: OpenAI]

2. Misinformation, Disinformation, and Sociopolitical Instability

AI-powered tools are increasingly used to generate and spread content. However, not all AI-generated content is factual or unbiased. If not carefully managed, AI systems can:

- Amplify misinformation and disinformation: AI-generated fake news, propaganda, and deepfakes can spread rapidly across social media, manipulating public opinion, influencing elections, and inciting violence.

- Create echo chambers: AI-powered recommendations can reinforce existing biases and beliefs by pushing politically charged or misleading content, hindering critical thinking and informed decision-making.

- Fuel political polarization: By tailoring content to individual preferences, AI can deepen existing political and social divides.

- Threaten social harmony: AI-generated content can inflame religious, racial, or geopolitical tensions, potentially leading to social unrest and conflict.

- Enable targeted manipulation: AI can tailor disinformation campaigns to exploit the vulnerabilities of specific groups, potentially swaying election results or causing social unrest.

- Automate disinformation: AI can automate the creation of fake social media accounts, bots, and coordinated disinformation campaigns, making it difficult to discern authentic voices from manipulated narratives.

The speed and scale at which AI can generate and distribute persuasive content seriously threaten social and political stability. Ensuring AI can detect and flag false information while mitigating its potential to amplify existing biases and manipulate public opinion is crucial to preventing real-world harm.

Fig 2: Image created with AI, depicting Donald Trump carrying cats away from Haitian immigrants. [Credit: Rebecca Noble/AFP via Al Jazeera]

3. Ethical Data Collection & Transparency

AI safety isn’t just about output—it’s also about how models are built. Ethical concerns include:

- Data privacy: Users should have control over how their data is used by AI. This includes knowing how their conversations are stored, preventing the creation of exploitative profiles, and protecting against unauthorized access. Children’s data, especially, requires special protection.

- Consent: AI should not learn from personal data without explicit permission, especially sensitive information or data from vulnerable groups. Users need clear ways to opt out of data collection.

- Transparency: Many AI systems operate as “black boxes,” making it difficult to understand why they produce certain responses. This lack of explainability can breed mistrust and hinder efforts to identify and address biases or errors.

Developing transparent and accountable AI systems while respecting data privacy and user consent is critical to maintaining public trust and upholding human rights. Without careful attention to these ethical considerations, AI systems can reinforce existing power imbalances and perpetuate social injustices.

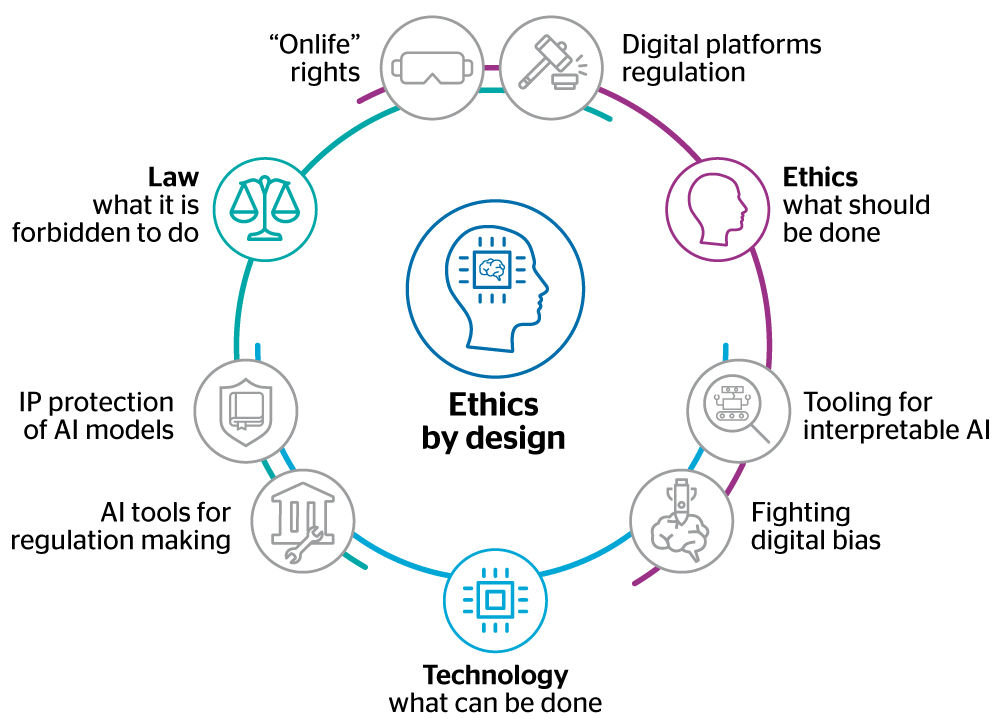

Fig 3: AI ethics by design. [Source: Atos, 2019]

The ‘AI ethics by design’ diagram illustrates the interconnectedness of ethical considerations, including ‘Law,’ ‘Transparency,’ and ‘Bias Fighting.’ This visual representation aligns with the section’s emphasis on data privacy, consent, and the challenge of ‘black box’ systems.

How to Make AI Safer

Making AI safer requires a multi-pronged approach that combines technical solutions, ethical guidelines, and robust governance. Here’s how we can work towards that goal:

- Transparency is Key: AI development must be transparent. This means disclosing training data, explaining how AI models work, and actively mitigating biases. Transparency allows scrutiny, auditing, and correction of AI systems.

- Test & Challenge AI: Rigorous testing is crucial. Teams should run continuous “red teaming” exercises, in which experts try to find weaknesses and biases in AI systems. This is especially important for multilingual AI, where biases can manifest differently across languages.

- Ethical Guidelines & Regulations: Governments and organizations must establish clear AI rules. These rules should prevent AI from spreading misinformation or hate speech, inciting violence, and perpetuating biases in areas like hiring, policing, healthcare, and education.

- Collaboration for a Safer Future: Ensuring AI safety is an ongoing process. Developers, researchers, governments, regulators, and communities must all work together to create AI that serves humanity responsibly, ethically, and fairly. This includes building safer models, enforcing ethical policies, and providing feedback on AI’s behavior.
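Part of the “Test & Challenge AI” point above can be automated. The sketch below shows one simple probe under stated assumptions: it sends the same prompt with only a group term swapped and flags pairs whose answers diverge sharply. The template, the group terms, `stub_model` (a deliberately biased stand-in for a real model call), and the 0.5 overlap threshold are all hypothetical, and word overlap is a crude proxy that does not replace expert human red teamers.

```python
from itertools import combinations

# Hypothetical prompt template and group terms, chosen only for illustration.
TEMPLATE = "Describe a typical day for a {person} who works as a CEO."
GROUPS = ["woman", "man"]

def stub_model(prompt):
    """Stand-in for a real model call; deliberately biased for the demo."""
    if " woman " in prompt:
        return "She balances meetings with caring for her family."
    return "He leads meetings and sets the company strategy."

def token_overlap(a, b):
    """Jaccard similarity between the lowercase word sets of two texts."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    union = words_a | words_b
    if not union:
        return 1.0  # two empty responses are treated as identical
    return len(words_a & words_b) / len(union)

def probe(model, template, groups, min_overlap=0.5):
    """Flag group pairs whose responses diverge more than the threshold."""
    responses = {g: model(template.format(person=g)) for g in groups}
    flagged = []
    for g1, g2 in combinations(groups, 2):
        score = token_overlap(responses[g1], responses[g2])
        if score < min_overlap:
            flagged.append((g1, g2, score))
    return flagged

flagged = probe(stub_model, TEMPLATE, GROUPS)
for g1, g2, score in flagged:
    print(f"Review responses for {g1!r} vs {g2!r} (overlap {score:.2f})")
```

The same harness could be run with templates written in other languages, which is one way to check whether a bias appears in one language but not another. Flagged pairs are candidates for human review, not verdicts.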

By embracing these strategies and fostering a culture of collaboration and accountability, we can harness AI’s immense potential while mitigating its risks, ensuring a future where AI benefits humanity.

Final Thoughts: Building Trust in AI

AI safety isn’t just about preventing harm; it’s about ensuring AI works for everyone. Whether the goal is eliminating bias, fighting misinformation, or protecting privacy, AI developers, policymakers, and users must work together to build ethical, fair, and inclusive AI systems.

By addressing these issues now, we can shape AI as a force for good—rather than a tool that deepens existing inequalities.
